Juan Carlos Niebles

Research Director, Salesforce AI Research

Co-Director, Stanford Vision and Learning Lab

Adjunct Professor, CS Dept., Stanford University

bio

Juan Carlos Niebles is a Research Director at Salesforce and an Adjunct Professor of Computer Science at Stanford University, where he serves as co-Director of the Stanford Vision and Learning Lab. His research focuses on the intersection of computer vision, machine learning, multimodal AI, and autonomous agents.

With over 100 published articles in top-tier venues, Juan Carlos is a recognized leader in the global AI community. His career spans significant industry-academic leadership, including roles as Associate Director of Research for the Stanford-Toyota Center for AI Research and Senior Research Scientist at the Stanford AI Lab. He also held a long-term professorship at Universidad del Norte in Colombia.

Beyond research, he helps shape the AI ecosystem as a member of the AI Index Steering Committee, as Curriculum Director for Stanford-AI4ALL, and as an Area Chair for CVPR, ICCV, and ECCV. He also served as an Associate Editor for IEEE TPAMI. His contributions have been recognized with the Microsoft Research Faculty Fellowship, several Google Research Awards, and a Fulbright Fellowship. In 2025, he was named one of the Top 100 most prominent leaders in AI in Colombia and was previously a Forty Under Forty recipient.

He holds a Ph.D. degree in Electrical Engineering from Princeton University, an M.Sc. from the University of Illinois at Urbana-Champaign, and an Electronics Engineering degree from Universidad del Norte.

research

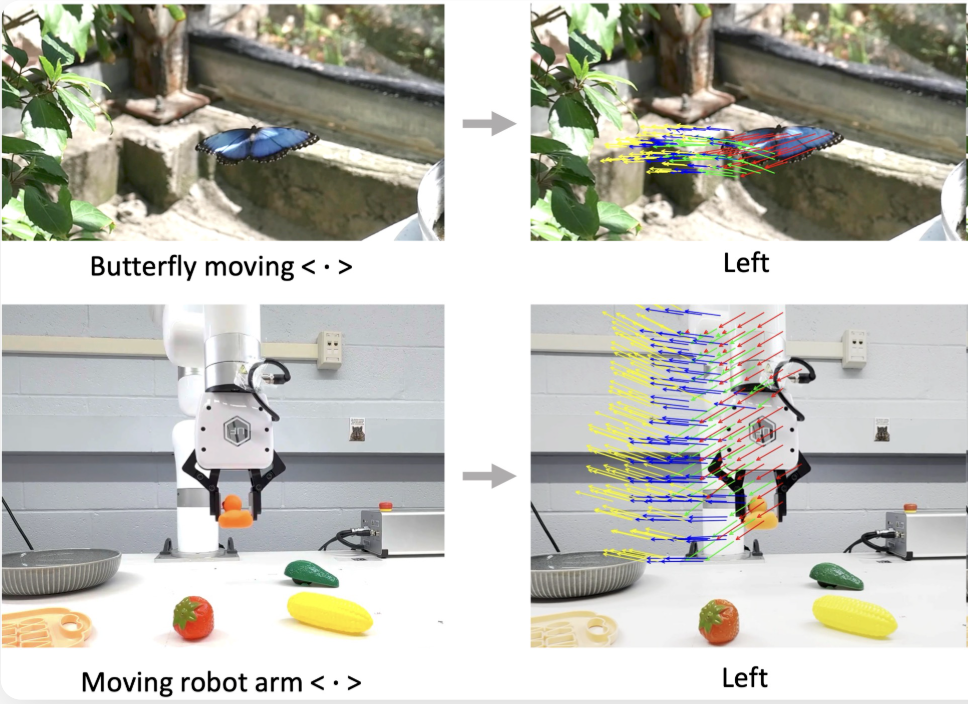

My research is centered on Computer Vision, with the ultimate goal of building multimodal AI systems that empower users through highly contextualized assistance. This requires a leap from passive observation to active partnership, beginning with event-aware perception to transform raw video into a structured understanding of human actions.

By bridging the gap between recognition and reasoning, my work seeks to infer human goals and intentions, allowing AI to move beyond simple labeling and toward anticipating a user’s needs in real time. These capabilities are fundamental to the development of embodied agents capable of navigating dynamic environments and performing meaningful, supportive tasks. We aim to scale these multimodal technologies to solve high-impact, practical problems that enhance how humans and machines interact.

news

| Date | News |
|---|---|
| Jun 2026 | I am giving keynote and invited talks at CVPR 2026 workshops: CV4Smalls 2026, T4V 2026 (Transformers for Vision and Multimodal AI), VITA 2026 (Vision for Intelligent Task Assistants), and MAR 2026 (Multimodal Algorithmic Reasoning). Check out my slides here. |
| Apr 2026 | I am a keynote speaker at the ICLR 2026 Workshop on Multimodal Intelligence: Next Token Prediction & Beyond, and an invited speaker at the ICLR 2026 Workshop on Navigating and Addressing Data Problems for Foundation Models. Check out my slides here. |
| Feb 2026 | I am a Lead Area Chair for ECCV 2026. |